Welcome, AI enthusiasts

OpenAI, Anthropic, and Google are working together to stop Chinese rivals from copying their most advanced models using clever shortcuts like distillation. It’s a new kind of tech alliance, and a sign that the race to protect AI innovation is heating up. Let’s dive in!

In today’s insights:

OpenAI, Anthropic & Google team up to stop Chinese rivals

Anthropic Launches Project Glasswing to Tame Its Most Dangerous Model

OpenAI's Blueprint for the AI Economy

Read time: 4 minutes

LATEST DEVELOPMENTS

U.S. AI GIANTS

🤝 OpenAI, Anthropic & Google team up to stop Chinese rivals

Evolving AI: OpenAI, Anthropic, and Google are joining forces to stop Chinese rivals from cloning their most advanced AI models through unauthorized distillation.

Key Points:

The three rivals are sharing intelligence through the Frontier Model Forum to detect and block adversarial distillation attempts.

Anthropic has publicly named DeepSeek, Moonshot, and MiniMax as companies that extracted capabilities from its Claude model.

US officials estimate these unauthorized copying practices cost American AI labs billions in lost revenue each year.

Details:

The three companies are sharing information through the Frontier Model Forum to detect adversarial distillation, a technique where outputs from an existing AI model are used to train a cheaper copycat. Chinese developers repeatedly query systems like ChatGPT, Claude, or Gemini, then use the outputs to train competing models at far lower cost.

Distillation itself is a standard technique in which a large "teacher" model trains a smaller "student" model. It becomes adversarial when outside labs hammer US models with automated prompts to clone core behavior without investing in original training. To fight back, the companies are developing technologies to identify abnormal traffic patterns that signal automated cloning attempts. Anthropic went furthest publicly, blocking Chinese-controlled companies from using Claude altogether and warning that the problem poses a national security risk beyond any single company.
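For readers who want to see the teacher-student idea in concrete terms, here is a minimal sketch of the classic textbook distillation loss. It is an illustration only, not any lab's actual method. The function names, the example scores, and the temperature value are all assumptions made for this example. It also assumes access to the teacher's raw scores (logits). Through a public API an outside lab usually sees only text, so in that setting the student would instead be fine-tuned on the teacher's written responses.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    Hypothetical helper: 0 means the student matches the teacher exactly,
    and larger values mean the student's predictions are further off.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Made-up scores over three possible next tokens.
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.4]  # nearly agrees with the teacher
far_student = [0.5, 1.0, 4.0]    # prefers a different token

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

Training the student means nudging its scores to shrink this number across many prompts, which is why a large volume of automated queries is the telltale sign defenders look for.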

Why It Matters:

This collaboration is notable because OpenAI, Google, and Anthropic are fierce commercial rivals, yet the threat from adversarial distillation is serious enough to push them into a formal intelligence-sharing arrangement. The concern goes beyond lost revenue. US AI firms warn that copied models may ship without the original safeguards, leaving powerful systems deployed with no built-in protections and increasing the risk of misuse. In addition, if Chinese labs can replicate frontier capabilities at a fraction of the cost, it undermines the massive investment Western companies have poured into original research.

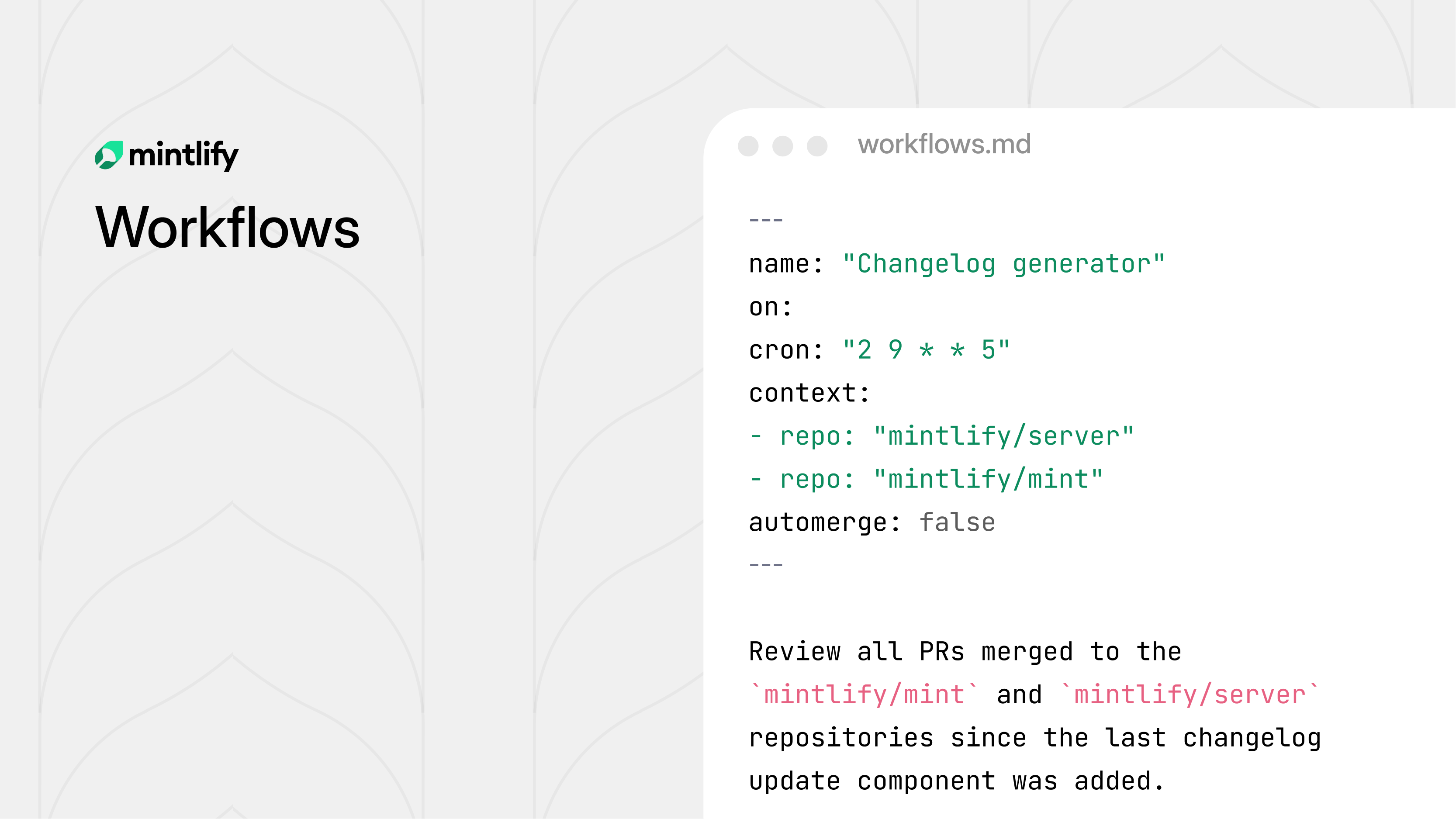


Anthropic Launches Project Glasswing to Tame Its Most Dangerous Model

Evolving AI: Anthropic has unveiled Project Glasswing, a sweeping industry coalition built around its new Mythos Preview model and its unprecedented ability to find and exploit software vulnerabilities.

Key Points:

Mythos Preview has already found thousands of high-severity vulnerabilities entirely autonomously, without human steering.

Anthropic is committing up to $100M in usage credits for Mythos Preview across Project Glasswing, plus $4M in direct donations to open-source security organizations.

Anthropic says it may be only six to eighteen months before other AI companies release models with comparable capabilities.

Details:

Project Glasswing brings together AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA and Palo Alto Networks in an effort to secure the world's most critical software. The initiative was formed around a stark finding: AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.

The model's real-world results are striking. Mythos Preview found a 27-year-old vulnerability in OpenBSD — one of the most security-hardened operating systems in the world — that allowed an attacker to remotely crash any machine just by connecting to it. It also discovered a 16-year-old flaw in FFmpeg in a line of code that automated testing tools had hit five million times without catching the problem. Anthropic says the model will not be made generally available. The company plans to launch new safeguards with an upcoming Claude Opus model first, allowing it to improve and refine them with a model that does not pose the same level of risk as Mythos Preview.

Why It Matters:

Project Glasswing is an unusual move: a company openly admitting its own model is too dangerous to release and building a defensive coalition first. Anthropic acknowledges that these powerful cyber capabilities could be used to exploit flaws in the world's most critical software and could make cyberattacks far more frequent and destructive. The offset strategy (give defenders early access while safeguards are built) is logical but not guaranteed to work. Close behind Mythos are the next OpenAI model and the next Google Gemini, and open-source models trail them by only a few months, meaning the window for defenders to get ahead is narrow. Whether a 12-company coalition can outpace a global wave of increasingly capable models is the defining question of this initiative.


OpenAI's Blueprint for the AI Economy

Evolving AI: OpenAI has released a set of economic policy proposals for navigating the rise of superintelligent AI.

Key Points:

OpenAI is calling for a Public Wealth Fund to give all Americans a stake in AI-driven growth, not just investors.

The company floats a robot tax and a shift from taxing labor to taxing capital as AI erodes the traditional payroll tax base.

A subsidized four-day workweek is on the table, but critics note the plan still leaves displaced workers behind.

Details:

OpenAI has published a policy framework outlining how wealth and work could be restructured in what it calls the "intelligence age." The proposals blend progressive mechanisms like expanded safety nets with a market-driven economic model. OpenAI warns that AI-driven growth could hollow out the tax base funding Social Security, Medicaid, and housing assistance as corporate profits expand and reliance on labor income shrinks. To counter this, the company suggests higher taxes on corporate income and on capital gains at the top, as well as a potential robot tax, an idea Bill Gates first floated in 2017.

OpenAI also proposes creating a Public Wealth Fund to give Americans an automatic public stake in AI companies and infrastructure, with returns distributed directly to citizens. On the labor side, it proposes a subsidized four-day workweek with no pay cut, along with portable benefit accounts that travel with workers across jobs. However, OpenAI frames many benefits as corporate responsibilities rather than government ones, which means workers whose jobs are eliminated by automation could lose these protections entirely. The document also addresses AI safety, calling for containment plans for dangerous systems, new oversight bodies, and safeguards against high-risk uses like cyberattacks and bioweapons.

Why It Matters:

OpenAI (now an $852 billion for-profit company after starting as a nonprofit) is framing these proposals as a call for a "new industrial policy agenda" to ensure superintelligence benefits everyone. But the tension is hard to ignore. A company that profits directly from automation is now designing the safety net for those it displaces. The proposals carry real weight as a signal to policymakers and investors, but they are ultimately a wish list, not a binding commitment. As AI reshapes labor markets, the question isn't just what safety nets are proposed; it's who actually builds them and who they leave out.

This video from Anthropic breaks down why AI can feel emotional, even though it isn’t. New research shows models develop internal “emotion-like” patterns that shape how they respond, adapt tone, and even make decisions. It’s a simple look at why AI behavior can feel surprisingly human, and what’s actually happening under the hood.

QUICK HITS

🤝 Anthropic inks deal with Google, Broadcom to secure AI chips; tops $30 billion run-rate.

🚗 Uber scales on AWS to help power millions of daily trips and train its AI models.

🤖 Researchers build a robotic swarm with no electronics, no batteries and no brains.

🗺️ Google Maps Adds AI-Powered Photo Captions via Gemini.

📈 Trending AI Tools

🛠️ Base44 - Build fully functional apps in minutes with just your words, no coding needed*

🧠 Brainly - AI learning companion

💬 Character AI - Chatbot platform to converse with AI-driven personalities, ranging from fictional characters to historical figures and celebrities

✍️ Sudowrite - Fiction-focused AI writing tool to boost creativity

*partner link