
Welcome, AI enthusiasts

White-collar jobs were supposed to be the safe ones, but Anthropic’s latest research suggests that assumption may not hold for much longer. The company just mapped which jobs AI can already perform today and which ones could be automated next. Let’s dive in!

In today’s insights:

Anthropic reveals the jobs most at risk

OpenAI hardware leader resigns after deal with Pentagon

Cursor’s AI agents now run hundreds of coding tasks per hour

Read time: 4 minutes

LATEST DEVELOPMENTS

ANTHROPIC

⚠️ Anthropic Reveals the Jobs Most at Risk

Evolving AI: Anthropic just released a detailed study comparing how AI is actually used at work today with what it could theoretically handle. The gap between the two may shape the next phase of the labor market.

Key Points:

AI can theoretically perform most tasks in fields like finance, law, programming, and office administration.

Roles like programmers, financial analysts, customer service agents, and data entry workers show the highest exposure.

The workers most affected tend to be highly educated and higher paid.

Details:

Anthropic researchers analyzed real workplace usage of Claude and compared it with what large language models are capable of doing across different occupations. The gap is large. In computer and math roles, AI could theoretically handle around 94% of tasks, yet real-world usage currently covers about 33%. Office and administrative roles show a similar pattern, where AI could perform roughly 90% of tasks but is used for far less today. The study finds that workers most exposed to AI are often highly educated and well paid. Roles like programmers, financial analysts, customer service agents, and data entry workers show the highest exposure. Jobs requiring physical presence such as cooks, mechanics, and bartenders show little to no exposure.
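
To make the size of that gap concrete, the short Python sketch below works through the computer and math figures quoted above (94% theoretical, about 33% observed). The variable names are illustrative, and the inputs are the rounded percentages from this summary, not exact values from the study.

```python
# Rounded figures for computer and math roles, as quoted in this summary.
theoretical_pct = 94  # share of tasks AI could theoretically handle
observed_pct = 33     # share of tasks covered by real-world usage

# Absolute gap, in percentage points.
gap_points = theoretical_pct - observed_pct

# Observed usage as a fraction of what is theoretically possible.
share_used = observed_pct / theoretical_pct

print(f"Gap: {gap_points} percentage points")
print(f"Observed use is about {share_used:.0%} of theoretical capacity")
```

On these numbers, real-world usage covers roughly a third of what the models could theoretically handle in that category. The same calculation cannot be run for office and administrative roles, because this summary gives no observed usage figure for them.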

Why It Matters:

Anthropic’s new research says AI use at work is still well below what the models can technically handle. The most exposed roles skew toward higher-paid, degree-heavy knowledge work, and the early signs are showing up in slower hiring rather than mass layoffs. That makes this less about a sudden jobs apocalypse and more about a quiet squeeze where fewer juniors get hired, fewer routine tasks stay human, and white-collar work starts changing from the bottom up.

TOGETHER WITH DATADOG

🔐 AI Security Best Practices

Evolving AI: Your Guide to Building Secure AI Applications.

As AI adoption accelerates, new attack surfaces are emerging across infrastructure, supply chains, and model interfaces. Datadog’s AI Security Best Practices Guide breaks down how to secure:

The underlying components that host and run AI applications

The software and data that an AI application uses to operate

The entry points and business logic that enable a user to interact with an AI application

This guide provides actionable strategies to help teams strengthen AI security without slowing innovation.

OPENAI

OpenAI Hardware Leader Resigns After Deal With Pentagon

Evolving AI: OpenAI’s head of hardware has resigned after raising concerns about the company’s new Pentagon partnership.

Key Points:

OpenAI hardware lead Caitlin Kalinowski stepped down over concerns about the Pentagon deal.

She warned that AI deployment on classified defense networks was announced too quickly.

OpenAI says its policies still block domestic surveillance and autonomous weapons.

Details:

Caitlin Kalinowski, who oversaw hardware at OpenAI, resigned after the company announced a deal involving the U.S. Department of Defense. She said the agreement to deploy OpenAI models on the Pentagon’s classified cloud networks was announced before clear safeguards were defined. In a post on X, Kalinowski said AI will play a role in national security but warned that issues such as surveillance without judicial oversight or lethal autonomy without human approval deserved deeper debate. OpenAI responded that its policies still prohibit domestic surveillance and autonomous weapons. The company said the Pentagon agreement includes safeguards and that discussions with employees, policymakers, and civil groups will continue.

Why It Matters:

This story gets bigger when you zoom out. Over the past year, the real fight in AI has shifted from who has the best model to who gets to control where that model ends up, and defense is now right in the middle of it. OpenAI’s Pentagon deal came just days after Anthropic drew a harder line over military guardrails, and Kalinowski’s exit shows these debates are no longer just happening outside the labs; they’re happening inside them too. That matters to anyone using AI, because once these systems move from public chatbots into state infrastructure, the argument stops being about cool features and starts being about power, oversight, and who gets to say no.

🤔 How would you like to learn AI beyond the newsletter?

Evolving AI: We’re exploring new ways to help you build real AI skills. Beyond reading the news, what formats would you find most useful for learning and applying AI in your work?

Click the option you’d be most interested in below.

- Live, hands-on workshops on using AI across sales, marketing, and operations

- Quarterly workshop breaking down the most important AI developments and new use cases for businesses

- Online AI cohort lasting 2 to 4 months

- 1-on-1 advisory calls on AI integration for companies

- Guides, hands-on live sessions, and an exclusive community for the 1% in AI

CURSOR

Cursor’s AI Agents Now Run Hundreds of Coding Tasks per Hour

Evolving AI: Cursor is pushing deeper into agentic coding, with AI systems now running hundreds of automated engineering tasks every hour.

Key Points:

Cursor runs hundreds of automated workflows per hour across engineering systems.

AI agents respond to incidents and analyze server logs through direct integrations.

Cursor’s annual revenue has surged past $2B as demand for AI coding tools grows.

Details:

Cursor’s internal automation system now handles far more than code review. PagerDuty incidents can automatically trigger an AI agent that inspects server logs through MCP connections to diagnose issues. Other agents track changes across the company’s codebase and post weekly summaries to Slack. According to the team, the key shift is automation itself. Tasks that engineers previously had to start by hand now run continuously in the background.
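
For readers who want a feel for the pattern, here is a minimal Python sketch of an incident-triggered automation. It is not Cursor's implementation and uses no real PagerDuty or MCP API. `Incident`, `diagnose`, `on_incident`, and the fake log data are all hypothetical names made up for illustration, and a simple keyword filter stands in for the AI agent.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Incident:
    """Hypothetical alert payload (not PagerDuty's real schema)."""
    service: str
    summary: str


# Hypothetical log source. In the setup described above, logs would be
# reached through an MCP connection instead of a plain function.
LogFetcher = Callable[[str], List[str]]


def diagnose(incident: Incident, fetch_logs: LogFetcher) -> str:
    """Stand-in for the agent: summarize error lines for the affected service."""
    errors = [line for line in fetch_logs(incident.service) if "ERROR" in line]
    if not errors:
        return f"{incident.service}: no errors found in logs"
    return f"{incident.service}: {len(errors)} error line(s), latest: {errors[-1]}"


def on_incident(incident: Incident, fetch_logs: LogFetcher,
                notify: Callable[[str], None]) -> None:
    """Runs whenever an incident arrives; nobody has to start it by hand."""
    notify(diagnose(incident, fetch_logs))


# Fake data so the sketch runs on its own.
FAKE_LOGS: Dict[str, List[str]] = {
    "checkout": ["INFO start", "ERROR db timeout", "ERROR db timeout after retry"],
}


def fake_fetch(service: str) -> List[str]:
    return FAKE_LOGS.get(service, [])


messages: List[str] = []
on_incident(Incident("checkout", "High error rate"), fake_fetch, messages.append)
print(messages[0])
```

The point of the sketch is the shape, not the details: an event arrives, a handler gathers context, and a result is posted somewhere, all without an engineer kicking it off.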

Why It Matters:

Cursor’s AI coding agents are starting to watch systems, react to incidents, summarize work, and keep moving without someone constantly steering them. That lines up with where the market is heading, with Cursor launching always-on automations as its revenue reportedly passed $2 billion, while OpenAI and Anthropic both pushed further into agentic coding in recent weeks. Software teams are getting used to AI handling parts of the job that used to require human attention every single time, and that changes what engineering work even looks like from here.

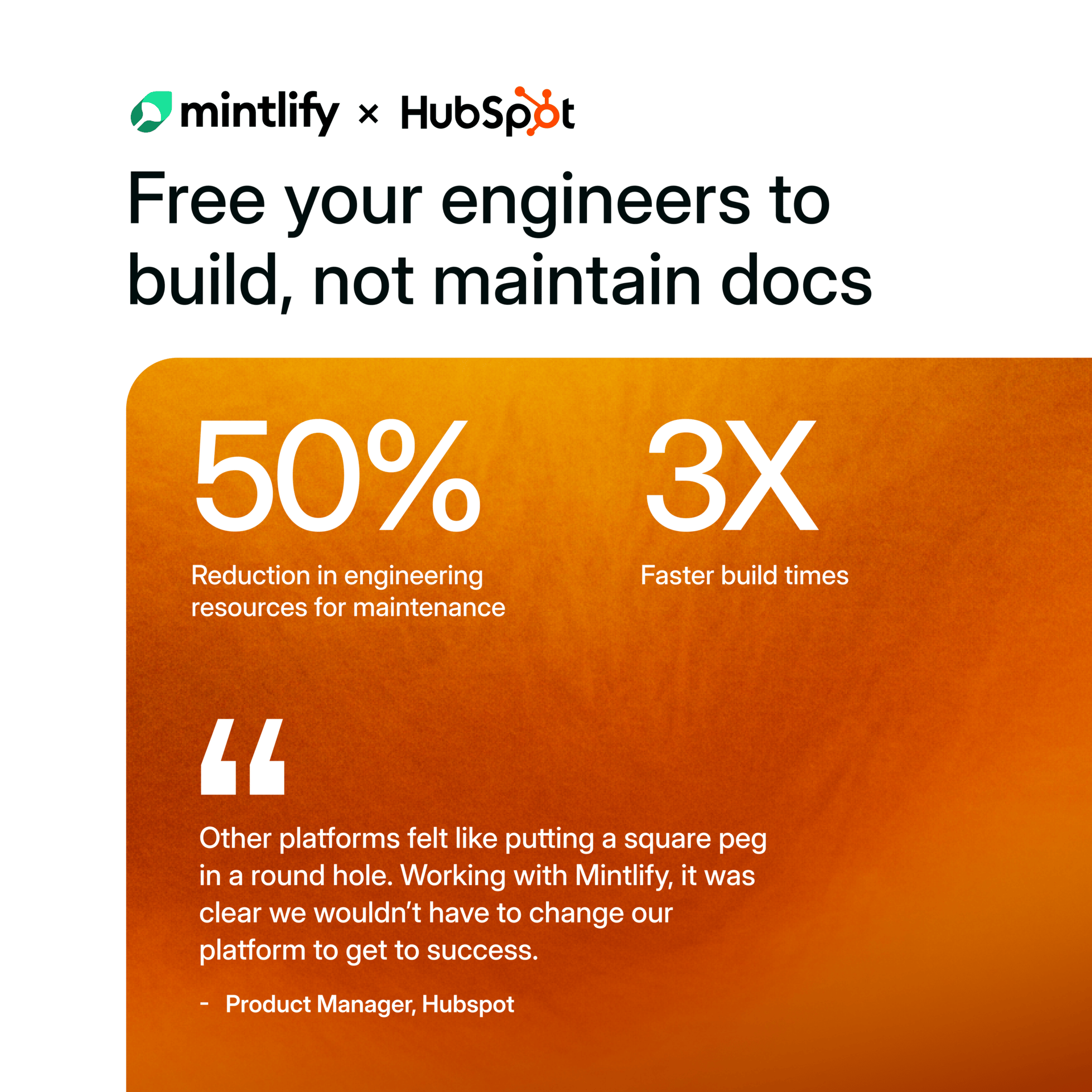

See Why HubSpot Chose Mintlify for Docs

HubSpot switched to Mintlify and saw 3x faster builds with 50% fewer eng resources. Beautiful, AI-native documentation that scales with your product — no custom infrastructure required.

QUICK HITS

🧠 Chinese start-up DeepSeek teams with Tencent, HKU on AI tool to sharpen 3D design.

🪖 ‘It means missile defence on datacentres’: drone strikes raise doubts over Gulf as AI superpower.

🔥 Bay Area high school students develop AI-powered system to detect, suppress wildfires.

🧪 Large AI models can speed catalyst discovery by predicting performance before synthesis.

🏗️ Oracle and OpenAI drop Texas data center expansion plan, Bloomberg News reports.

🎬 AI-generated Iran war videos surge as creators use new tech to cash in.

📈 Trending AI Tools

🚀 Sanebox – AI-powered inbox assistant that automatically filters out unimportant emails*

🔐 Lumo – Private AI assistant that runs fully on your device

🛠️ Stateset.io – Build faster, autonomous commerce operations

🎨 Recraft – Generate clean, styled AI images with text support

*partner link